Outside the high-performance computing, or HPC, community, exascale may seem more like fodder for science fiction than a powerful tool for scientific research. Yet, when seen through the lens of real-world applications, exascale computing goes from ethereal concept to tangible reality with exceptional benefits.

Mike Huettel is a cyber technical professional. He also recently completed the six-month Cyber Warfare Technician course for the United States Army, where he gained technical and tactical proficiency and leadership skills for operations throughout the cyber domain.

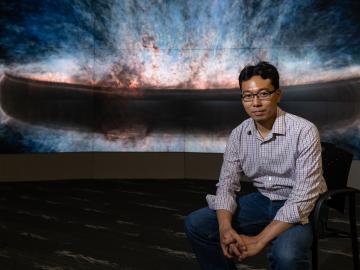

Cody Lloyd became a nuclear engineer because of his interest in the Manhattan Project, the United States’ mission to advance nuclear science to end World War II. As a research associate in nuclear forensics at ORNL, Lloyd now teaches computers to interpret data from imagery of nuclear weapons tests from the 1950s and early 1960s, bringing his childhood fascination into his career.

ORNL hosted its fourth Artificial Intelligence for Robust Engineering and Science, or AIRES, workshop from April 18-20. Over 100 attendees from government, academia and industry convened to identify research challenges and investment areas, carving the future of the discipline.

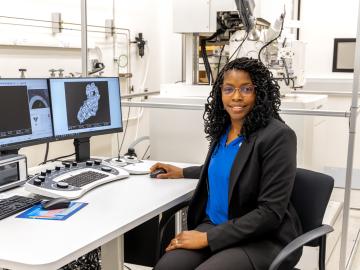

After completing a bachelor’s degree in biology, Toya Beiswenger didn’t intend to go into forensics. But almost two decades later, the nuclear security scientist at ORNL has found a way to appreciate the art of nuclear forensics.

Wildfires have shaped the environment for millennia, but they are increasing in frequency, range and intensity in response to a hotter climate. The phenomenon is being incorporated into high-resolution simulations of the Earth’s climate by scientists at the Department of Energy’s Oak Ridge National Laboratory, with a mission to better understand and predict environmental change.

When geoinformatics engineering researchers at the Department of Energy’s Oak Ridge National Laboratory wanted to better understand changes in land areas and points of interest around the world, they turned to the locals — their data, at least.

With the world’s first exascale supercomputer now fully open for scientific business, researchers can thank the early users who helped get the machine up to speed.

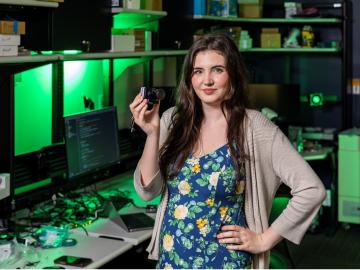

Tristen Mullins enjoys the hidden side of computers. As a signals processing engineer for ORNL, she tries to uncover information hidden in components used on the nation’s power grid — information that may be susceptible to cyberattacks.

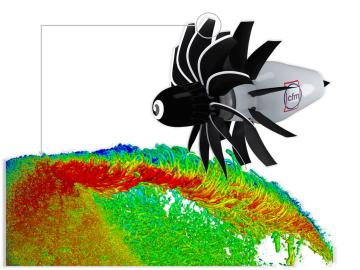

To support the development of a revolutionary new open fan engine architecture for the future of flight, GE Aerospace has run simulations using the world’s fastest supercomputer capable of crunching data in excess of exascale speed, or more than a quintillion calculations per second.