We demonstrated the feasibility of embedding functional programming (FP) computational cores written in Scala into programs written in three languages traditionally used to implement high performance computing (HPC) applications: Fortran, C, and C++. We also measured the performance and overhead of pilot hybrid FP programs.

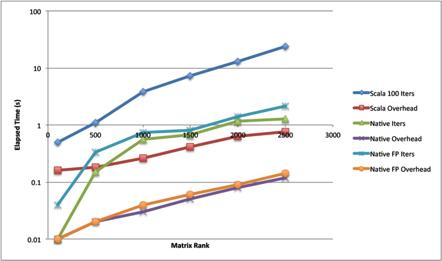

To demonstrate the feasibility of constructing hybrid FP applications, we developed five computational cores in Scala and embedded them into programs written in the three languages traditionally used to implement HPC applications: Fortran, C, and C++. Because Scala programs execute on a Java Virtual Machine (JVM), we embedded a JVM in Fortran, C, and C++ demonstration frame programs using the C-language Java Native Interface (JNI), and we added calls to each Scala FP core within each frame program. Because the JNI is a C-language API, invoking the Scala FP cores from C and C++ was relatively easy. Fortran and Scala, however, use substantially different in-memory data representations and calling conventions, so we developed an automated tool that generates wrapper functions to handle conversions between the two languages' data representations. We used a five-point stencil FP core to compare the performance and overhead of a hybrid FP program against an iterative implementation of the same stencil operation and against an FP implementation written in a traditional HPC language. We found a substantial performance gap between the hybrid FP implementation and both the iterative and traditional FP implementations, and our measurements suggested that the overheads of constructing a JVM and converting data representations for the Scala FP core were the primary contributors to this gap. In this sense, our hybrid FP programs are similar to GPU-accelerated programs, in which much work must be done in GPU kernels to amortize the cost of initializing the GPU device and transferring data between the GPU and the frame program.
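
To make the compared stencil variants concrete, the following is a minimal C++ sketch of a five-point stencil written two ways: as an explicit loop nest (iterative) and as a pure map over cell indices (functional style in a traditional HPC language). It is not the code used in this work. The `Grid` type, the function names, the equal-weight averaging, and the choice to copy boundary cells unchanged are all assumptions made for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical row-major 2D grid; not taken from the original work.
struct Grid {
    std::size_t rows, cols;
    std::vector<double> data;
    double at(std::size_t r, std::size_t c) const { return data[r * cols + c]; }
};

// Five-point stencil at an interior cell: equal-weight average of the cell
// and its north, south, west, and east neighbors (weights are an assumption).
inline double stencil_at(const Grid& g, std::size_t r, std::size_t c) {
    return (g.at(r, c) + g.at(r - 1, c) + g.at(r + 1, c) +
            g.at(r, c - 1) + g.at(r, c + 1)) / 5.0;
}

// Iterative version: explicit loops that overwrite interior cells of a copy.
Grid stencil_iterative(const Grid& in) {
    assert(in.data.size() == in.rows * in.cols);
    Grid out = in;  // boundary cells keep their input values
    for (std::size_t r = 1; r + 1 < in.rows; ++r) {
        for (std::size_t c = 1; c + 1 < in.cols; ++c) {
            out.data[r * in.cols + c] = stencil_at(in, r, c);
        }
    }
    return out;
}

// Functional-style version: each output cell is a pure function of the
// immutable input grid and the cell's linear index.
Grid stencil_functional(const Grid& in) {
    assert(in.data.size() == in.rows * in.cols);
    std::vector<std::size_t> idx(in.data.size());
    std::iota(idx.begin(), idx.end(), std::size_t{0});
    Grid out{in.rows, in.cols, std::vector<double>(in.data.size())};
    std::transform(idx.begin(), idx.end(), out.data.begin(),
                   [&in](std::size_t i) {
                       const std::size_t r = i / in.cols;
                       const std::size_t c = i % in.cols;
                       const bool interior = r > 0 && r + 1 < in.rows &&
                                             c > 0 && c + 1 < in.cols;
                       return interior ? stencil_at(in, r, c) : in.data[i];
                   });
    return out;
}
```

Both functions return a new grid and leave the input untouched, so they can be applied repeatedly, for example `g = stencil_functional(g)` inside a time-step loop. In a hybrid setting, the analogous call into a Scala core would additionally pay for JVM construction and data conversion, which is why the amount of work done per call matters.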

Funding for this work was provided by the Office of Advanced Scientific Computing Research, U.S. Department of Energy. The work was performed at Oak Ridge National Laboratory (ORNL).