Deep neural networks—a form of artificial intelligence used in everything from speech recognition to image identification to self-driving cars—have demonstrated mastery of tasks once thought uniquely human.

Now, researchers are eager to apply this computational technique, commonly referred to as deep learning, to some of science’s most persistent mysteries. But because scientific data often looks very different from the data used for tasks such as animal photo and speech recognition, developing the right artificial neural network can feel like an impossible guessing game for nonexperts. To expand the benefits of deep learning for science, researchers need new tools for building high-performing neural networks that don’t require specialized knowledge.

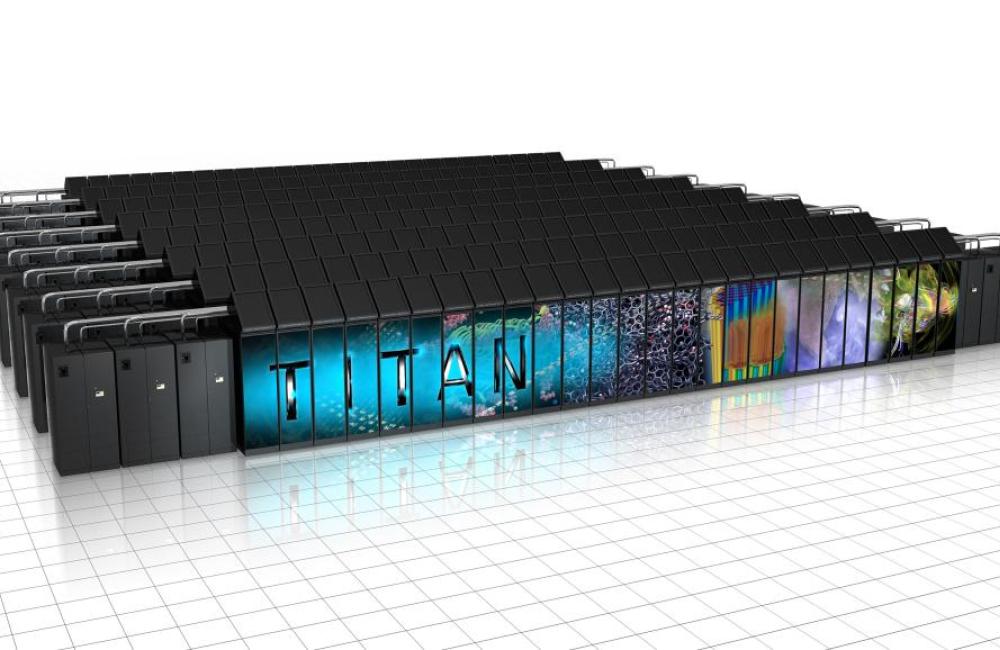

Using ORNL’s Titan supercomputer, a research team led by ORNL’s Robert Patton has developed a suite of algorithms capable of generating custom neural networks that match or exceed the performance of handcrafted artificial intelligence systems. Better yet, by leveraging the GPU computing power of the Cray XK7 Titan, these auto-generated networks can be produced quickly, in a matter of hours, as opposed to months using conventional methods.

The research team’s suite includes MENNDL, RAvENNA, and EONS, codes for evolving and fine-tuning neural networks. Scaled across Titan’s 18,000 GPUs, the algorithms can test and train thousands of potential networks for a science problem simultaneously, dropping poor performers and averaging high performers until an optimal network emerges. The process eliminates much of the time-intensive, trial-and-error tuning traditionally required of machine-learning experts.
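The idea of dropping poor performers and averaging high performers can be illustrated with a small evolutionary loop. The sketch below is not the team's code: `toy_score` is a hypothetical stand-in for training a network and measuring its accuracy, and the two evolved values (layer count and layer width) are assumptions chosen only to keep the example short.

```python
import random

def toy_score(candidate):
    """Hypothetical fitness: stands in for training a network and scoring it."""
    layers, width = candidate
    # Arbitrary peak at 6 layers and width 128, chosen only for illustration.
    return -((layers - 6) ** 2) - ((width - 128) / 32) ** 2

def evolve(pop_size=20, generations=30, seed=0):
    rng = random.Random(seed)
    # Start from random candidate configurations.
    population = [(rng.uniform(1, 12), rng.uniform(16, 512)) for _ in range(pop_size)]
    history = []  # best score seen at the start of each generation
    for _ in range(generations):
        ranked = sorted(population, key=toy_score, reverse=True)
        history.append(toy_score(ranked[0]))
        survivors = ranked[: pop_size // 2]  # drop the poor performers
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            # Average two high performers, then add a small random mutation.
            child = tuple((x + y) / 2 + rng.gauss(0, s)
                          for x, y, s in zip(a, b, (0.3, 8.0)))
            children.append(child)
        population = survivors + children
    return max(population, key=toy_score), history

best, history = evolve()
print(best, history[-1])
```

Because the survivors are carried over unchanged, the best score never gets worse from one generation to the next. In a real search, each call to the scoring function would be a full training run, which is why the approach benefits from thousands of GPUs.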

“There’s no clear set of instructions scientists can follow to tweak networks to work for their problem,” said research scientist Steven Young, a member of ORNL’s Nature Inspired Machine Learning team. “With these tools, they no longer have to worry about designing a network. Instead, the algorithm can quickly do that for them, while they focus on their data and ensuring the problem is well-posed.”

Neural networks consist of stacked layers of computational units that process many examples to identify patterns in data and draw conclusions. Although many parameters of a neural network are determined during the training process, initial model configurations must be set manually. These starting points, known as hyperparameters, include variables like the order, type, and number of layers in a network, and they can be the key to efficiently applying deep learning to an unusual dataset.
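To make the distinction concrete, here is a minimal sketch that separates choices fixed before training from the values learned during it. The `Hyperparameters` class and its fields are hypothetical and cover only a plain fully connected network; real searches span many more options, such as layer types and ordering.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hyperparameters:
    """Choices fixed before training starts (illustrative fields only)."""
    input_size: int
    layer_widths: tuple          # number and size of fully connected layers
    learning_rate: float = 0.01

def count_trainable_parameters(hp):
    """Count the weights and biases that training would have to learn."""
    total = 0
    fan_in = hp.input_size
    for width in hp.layer_widths:
        total += (fan_in + 1) * width  # one weight per input plus one bias, per unit
        fan_in = width
    return total

hp = Hyperparameters(input_size=784, layer_widths=(128, 64, 10))
print(count_trainable_parameters(hp))  # 109386
```

Changing a single hyperparameter, such as adding a layer, changes how many values training must fit, which is one reason these starting choices matter so much.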

MENNDL, for example, homes in on a neural network’s optimal hyperparameters by assigning a neural network to each Titan node. As the supercomputer works through individual networks, new data is fed to the system’s nodes asynchronously, meaning once a node completes a task, it’s quickly assigned a new task independent of the other nodes’ status. This ensures that the 27-petaflop Titan stays busy combing through possible configurations.
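The asynchronous pattern described here, where a finished worker immediately receives new work without waiting for the others, can be sketched with Python's standard library. This is an illustration only: a thread pool stands in for Titan's nodes, and `evaluate` is a hypothetical placeholder for training one candidate network.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait, FIRST_COMPLETED
from itertools import islice

def evaluate(config_id):
    """Hypothetical stand-in for training one candidate network on one node."""
    time.sleep(0.01 * (config_id % 3))  # candidates take uneven amounts of time
    return config_id, config_id % 7     # (candidate id, made-up score)

def run_async(num_configs=12, num_nodes=4):
    pending_ids = iter(range(num_configs))
    results = {}
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        # Give every worker one candidate to start with.
        running = {pool.submit(evaluate, i) for i in islice(pending_ids, num_nodes)}
        while running:
            # Wake up as soon as any single worker finishes.
            done, running = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                config_id, score = future.result()
                results[config_id] = score
                # Refill the free worker right away, regardless of the others.
                next_id = next(pending_ids, None)
                if next_id is not None:
                    running.add(pool.submit(evaluate, next_id))
    return results

results = run_async()
print(len(results))
```

The alternative, waiting for a whole batch to finish before starting the next, would leave fast workers idle while the slowest candidate trains.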

To demonstrate MENNDL’s versatility, the team applied the algorithm to several datasets, training networks to identify subcellular structures for medical research, classify satellite images with clouds, and categorize high-energy physics data. The results of each application matched or exceeded the performance of networks designed by experts.

With the OLCF’s next leadership-class system, Summit, set to come online this year, MENNDL will help scientists understand their data even better. “We’ll be able to evaluate larger networks much faster and evolve many more generations of networks in less time,” Young said.
